¼ dollar – United States

Context

Years: 1804–1807

Issuer: United States

Period: (since 1776)

Currency: (since 1785)

Subdivision: ¼ dollar = 25 Cents

Total mintage: 348,775

Material

References

KM: #

Numista: #30701

Value

Exchange value: ¼ USD = $0.25

Bullion value: $15.07

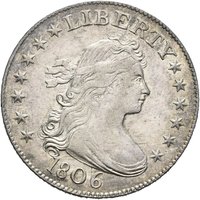

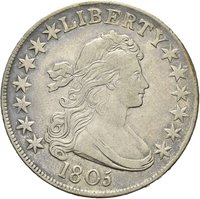

Obverse

Description:

Draped bust right, 13 stars.

Inscription:

LIBERTY

1806

Script: Latin

Engraver: Robert Scot

Designer: Gilbert Stuart

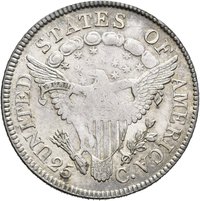

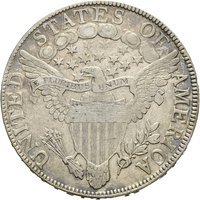

Reverse

Description:

Majestic heraldic eagle

Inscription:

UNITED STATES OF AMERICA

E PLURIBUS UNUM

25 C.

Script: Latin

Engraver: Robert Scot

Designer: Gilbert Stuart

Edge

Reeded

Mints

| Name | Mark |
|---|---|
| United States Mint of Philadelphia | — |

Mintings

| Year | Mint Mark | Mintage |
|---|---|---|
| 1804 | — | 6,738 |
| 1805 | — | 121,394 |
| 1806 | — | — |
| 1807 | — | 220,643 |

Historical background

In 1804, the United States operated under a bimetallic monetary system established by the Coinage Act of 1792. This law defined the U.S. dollar in terms of both gold and silver, setting a fixed exchange ratio of 15-to-1 (15 ounces of silver equaled 1 ounce of gold). The primary coins in circulation were the gold eagle ($10), half eagle ($5), and quarter eagle ($2.50), alongside silver dollars, half-dollars, quarters, dimes, and half-dimes, all minted by the fledgling U.S. Mint in Philadelphia. However, a critical problem plagued this system: the official mint ratio undervalued gold relative to world markets, causing gold coins to be exported or melted down for bullion, leaving silver as the dominant circulating coinage.

Despite the law, the everyday currency landscape for most Americans was one of chronic scarcity and confusion. The Mint's output was limited, and much of its silver coinage, like the iconic 1804 Silver Dollar (which was actually minted decades later for diplomatic purposes), did not enter general circulation. Instead, people relied on a jumble of foreign coins—primarily Spanish milled dollars and fractional "bits"—alongside banknotes of uncertain value issued by private state-chartered banks. This patchwork system created significant challenges for interstate trade and economic stability, as the value and acceptability of money varied greatly from one region to another.

The First Bank of the United States, chartered in 1791, was still operating in 1804, but it regulated state bank note issuance only to some degree. Consequently, while the federal government defined the legal monetary standard, the practical reality in 1804 was a fragmented and often unreliable currency supply. This set the stage for future financial instability and for ongoing debates about centralized banking, which continued after the Bank's charter expired in 1811 and culminated in the creation of the Second Bank of the United States in 1816.

⭐ Somewhat Rare