5 cents – United States

United States

Context

Material

References

KM: #

Numista: #25428

Value

Exchange value: 0.05 USD

Bullion value: $3.08

Obverse

Description:

Female bust left, hair flowing.

Inscription:

LIB . PAR . OF SCIENCE & INDUSTRY .

1792

Script: Latin

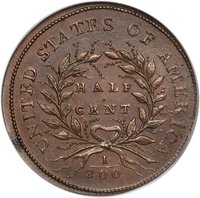

Reverse

Edge

Diagonally reeded

Mints

| Name | Mark |
|---|---|
| United States Mint of Philadelphia | — |

Mintings

| Year | Mint Mark | Mintage | Quality | Collection |
|---|---|---|---|---|
| 1792 | — | 1,500 | | |

Historical background

In 1792, the United States faced the critical task of establishing a stable and unified monetary system to replace the chaotic patchwork of foreign coins, state-issued bills, and Continental currency that had plagued the nation since the Revolution. The new federal government, empowered by the Constitution, which explicitly granted Congress the power to coin money, sought to create a currency that would foster commerce, ensure public credit, and symbolize national sovereignty. Secretary of the Treasury Alexander Hamilton was the primary architect: his seminal "Report on the Establishment of a Mint", submitted in 1791, laid the groundwork for a decimal-based system rooted in precious metals.

This effort culminated in the Coinage Act of 1792, signed into law by President George Washington on April 2. The act created the United States Mint in Philadelphia and defined the nation's currency unit, the dollar, based on a bimetallic standard. It precisely fixed the gold-to-silver ratio at 15:1, establishing that a gold "eagle" ($10) contained 247.5 grains of pure gold, while a silver dollar contained 371.25 grains of pure silver. The law also created a hierarchy of smaller denomination coins, from half-dollars down to half-dimes and coppers (cents and half-cents), to facilitate everyday transactions.
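The 15:1 ratio follows directly from the two metal contents quoted above, and the arithmetic can be checked in a few lines. The sketch below is illustrative only: the function and constant names are hypothetical, and the half-dime figure assumes that the smaller silver coins carried silver in strict proportion to their face value.

```python
from fractions import Fraction

# Pure-metal contents quoted in the text, in grains.
EAGLE_PURE_GOLD_GRAINS = Fraction("247.5")       # gold eagle, face value $10
DOLLAR_PURE_SILVER_GRAINS = Fraction("371.25")   # silver dollar, face value $1
EAGLE_FACE_VALUE_DOLLARS = 10


def implied_silver_to_gold_ratio():
    """Grains of pure silver per dollar divided by grains of pure gold per dollar."""
    gold_per_dollar = EAGLE_PURE_GOLD_GRAINS / EAGLE_FACE_VALUE_DOLLARS
    return DOLLAR_PURE_SILVER_GRAINS / gold_per_dollar


def pure_silver_grains(face_value_dollars):
    """Pure silver in a fractional coin, ASSUMING content proportional to face value.

    Pass the face value as a Fraction (e.g. Fraction(1, 20) for 5 cents)
    so the result stays exact.
    """
    return DOLLAR_PURE_SILVER_GRAINS * Fraction(face_value_dollars)


print(implied_silver_to_gold_ratio())              # 15
print(float(pure_silver_grains(Fraction(1, 20))))  # 18.5625
```

Exact fractions are used instead of floats so that the ratio comes out as precisely 15 rather than a rounded approximation.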

Despite this ambitious framework, the currency situation in 1792 remained more theoretical than practical. The Mint was slow to begin full-scale production, and for years foreign coins, especially Spanish silver dollars and their fractional parts, continued to dominate circulation. Furthermore, the fixed 15:1 mint ratio soon proved problematic: the market value of gold rose relative to silver, leaving gold undervalued at the Mint, so gold coins tended to be exported or melted down for bullion. Thus, while the 1792 law provided the essential foundation for American monetary policy, achieving a stable and sufficient circulating coinage would remain a persistent challenge for the young republic for decades.

💎 Extremely Rare